Untangling conflicting analytics across newsroom systems

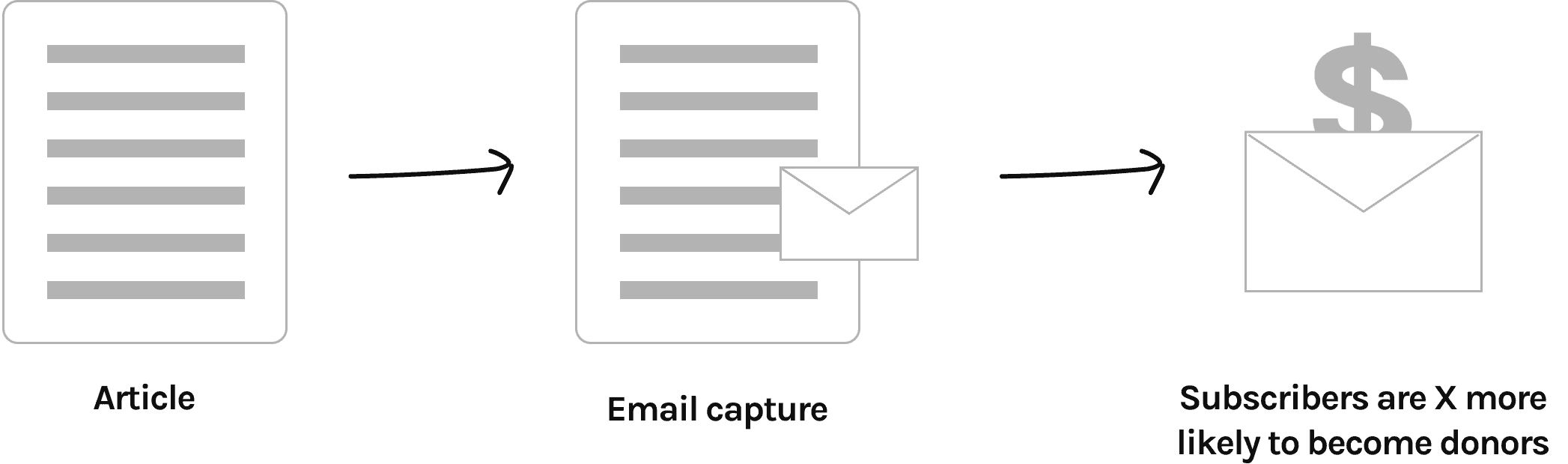

As the sole designer, I designed the email wall interface, configured analytics tracking, and analyzed performance data to drive optimizations that resulted in 30% list growth.

Problem

We identified a consistent 20–30% discrepancy in email acquisition and donation conversion numbers between our analytics tools and our CRM payment and email platform. This made it difficult to trust experiment results and skewed how the newsroom evaluated which content was performing best.
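To make the size of that gap concrete, here is a minimal sketch of how a discrepancy between two systems can be expressed as a percentage. The function name, the choice of the CRM count as the baseline, and the numbers are all assumptions for illustration; they are not the project's actual data or tooling.

```python
def relative_discrepancy(analytics_count: int, crm_count: int) -> float:
    """Return the gap between two counts as a fraction of the CRM count.

    Treating the CRM as the baseline is an assumption; either system
    could serve as the reference as long as the choice is documented.
    """
    if crm_count <= 0:
        raise ValueError("crm_count must be positive")
    return abs(analytics_count - crm_count) / crm_count


# Hypothetical numbers, for illustration only (not real project data).
signups_in_analytics = 750
signups_in_crm = 1000

gap = relative_discrepancy(signups_in_analytics, signups_in_crm)
print(f"{gap:.0%}")  # prints "25%"
```

Writing down which system is the baseline matters: the same two counts give a different percentage depending on which one sits in the denominator, which is one way teams end up quoting numbers that don't agree.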

Why it mattered

Teams were making editorial and growth decisions based on metrics that didn’t agree, which created confusion and risked optimizing for the wrong signals.

My role

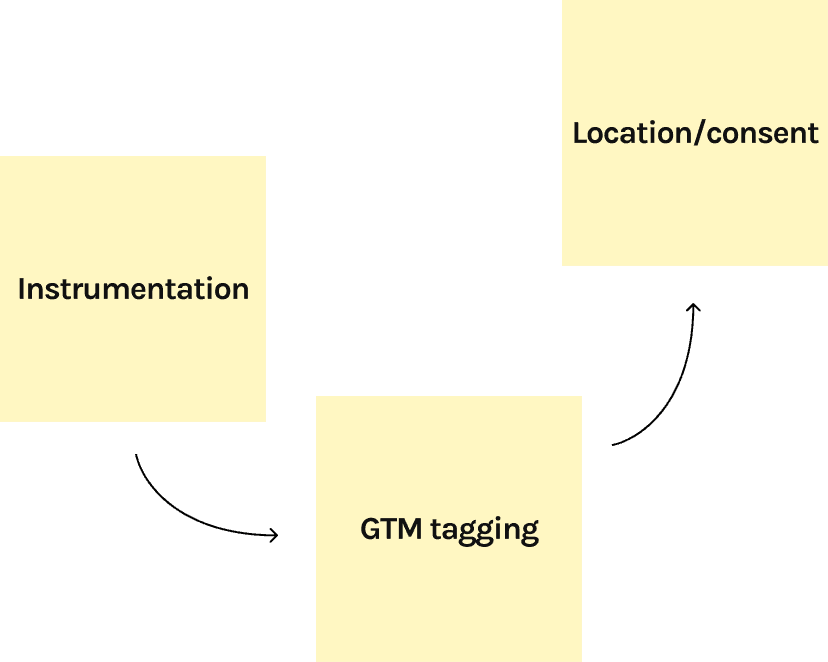

I helped frame the problem, tracked the discrepancies over time, and worked with our Chief of Product and vendors to understand where the numbers were breaking down.

Outcome

The gap was reduced in some areas, but not fully eliminated. More importantly, leadership adjusted how results were interpreted and where trust was placed in metrics.

What I learned

Measurement is a product risk

Perfect attribution is unrealistic

Designers need systems literacy, not ownership

Clear documentation prevents circular debates

Trust in data affects roadmap quality

What changed

While the gap wasn’t fully resolved, we aligned on which metrics were reliable for decision-making, documented known limitations, and adjusted how experiment results were interpreted.